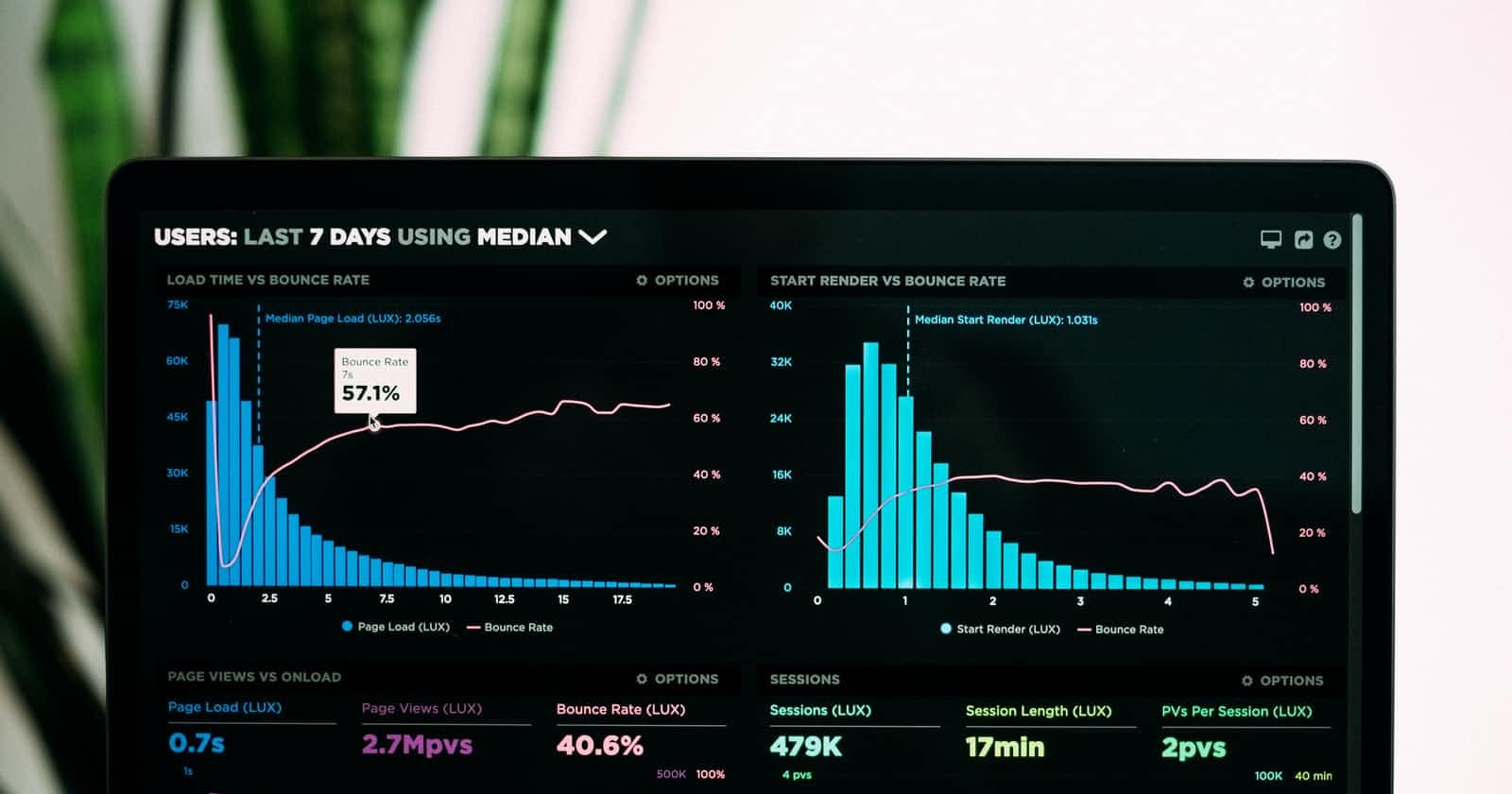

Photo by Luke Chesser on Unsplash

What Is Time Complexity

What is the time complexity analysis in the data structures and algorithms, and how to do it?

Hey👋 folks, welcome to the first article in my new series DSA With Me. In this article, we will learn about Time Complexity. We’ll see what is time complexity, why we need to do a complexity analysis of data structures and algorithms, and how to do it. But, before that a small intro. In this series, we’ll discuss Data structures and Algorithms, and practice problem solving every Friday. I’m not a teacher here; I’m also learning along with you all. Let’s make this a place where we can discuss, learn, and practice. So, if this sounds interesting to you, hop on, follow Dev Dust and let’s learn together.

What is complexity analysis?

In computer science, how to find out which algorithm or data structure is more efficient or faster. How can we compare two algorithms or data structures? We can compare the given algorithms on the basis of speed; the algorithm that completes a given task faster is better. Do you think this will be a legit test to compare our algorithms and find out which one is better?

Nope! We cannot compare the algorithms or data structures like this as it involves other variables like the CPU and memory power of the machine used, the state of the machine when the test is performed, etc. You got the point, we need a test that's not machine and state dependent, we need a test that's universal, and only depends upon the algorithm.

📌Complexity analysis is a technique to estimate the amount of time or space taken by the algorithm for a large amount of input. Complexity analysis is not ambiguous; it's a mathematical concept hence, it can be proved and it's the same for an algorithm irrespective of the machine power and state.

So we can compare two algorithms or data structures on the basis of time complexity and space complexity. Let's see the time complexity in detail.

What is the time complexity?

The time complexity of an algorithm is the amount of time taken by the algorithm to run as the input size grows.

Note: Time complexity is not the time taken by the algorithm to execute on a machine. Time complexity is the function of the length of the input.

The runtime of an algorithm can be different on different machines however, the time complexity of an algorithm is always the same irrespective of the machine. This is the difference between the time complexity and the runtime of an algorithm.

Best, Average, and Worst Case

In most of the algorithms and data structures, it's not possible to give a general time complexity. The time complexity is a function of input hence, it varies according to the input we provide. Let's take an example.

int getArrSum(int[] arr, int size){

if(size%2 == 0){

return 0;

}

int sum = 0;

for(int i=0; I<size; i++){

sum = sum + arr[I];

}

return sum;

}

In the above algorithm, the time complexity will be different with different inputs. If the size of the array is even then the algorithm only does a constant work of checking whether the size is even or not, and returns zero. However, if the size of the array is not even then the algorithm's time complexity will depend linearly upon the input size.

Here we can see, that the best case is when the input size of the array is even. And the worst case is when the input size of the array is odd.

Note: An algorithm is always judged on its worst case. Complexity analysis is done for a large amount of input. An algorithm with an efficient worst case is considered a better algorithm. The same goes for data structures.

Let's see how we express time complexity mathematically.

Asymptotic Notations

Asymptotic Notations are mathematical equations that represent the order of growth (can be time with input size or space with input size) of an algorithm.

There are three main types of asymptotic notations:

- Big O represents the exact or upper bound

- Theta Θ represents the exact bound

- Omega Ω represents the exact or lower bound

To calculate time complexity keep a few things in mind:

- Any operation, comparison, or conditional check performed by the algorithm takes constant time.

- Time complexity is only affected by loops and recursive calls.

- Complexity analysis is done for a large amount of input(size → ∞). Hence, in the asymptotic notations only the variable with the highest order matters.

Big O Notation

It represents the exact or upper bound of the order of growth.

For example,

3n + 10nlog(n) + 3

nlog(n) is the exact order of growth of the above equation. So, we can say that the Big O of the above equation is O(nlogn).

However, O(n²), O(n³), and order of growth higher than this can also be the answer because big O represents exact or upper bound. But, when we have the exact order of growth we use that only.

Mathematical definition and representation:

We say a function f(n) is Big O of g(n) if there exist constants c and m such that

f(n) ≤ cg(n) for all values of n≥m

Omega Notation (Ω)

It represents the exact or lower bound. However, if we can calculate the exact bound then we use that only.

For example,

3n + 10nlog(n) + 3

Here, nlog(n) is the exact order of growth of the above equation. So, we can say that the Omega notation of the above equation is Ω(nlogn).

However, Ω(n), Ω(logn), and order of growth lower than this can also be the answer because Omega notation represents exact or lower bound.

Mathematical definition and representation:

We say a function f(n) is Omega of g(n) if there exist constants c and m such that

f(n) ≥ cg(n) for all values of n≥m

Theta Notation (Θ)

It always represents the exact order of growth. However, algorithms are complex and it's not always possible to calculate the exact order of growth. Hence, to represent time complexity in the theta notation of an algorithm we may have to some assumptions.

Mathematical definition and representation:

We say a function f(n) is theta of g(n) if there exist constants c1, c2, and m such that

c1g(n) ≤ f(n) ≤ c2g(n) for all values of n≥m

Hence

f(n) = Θ(g(n)) if c1g(n) ≤ f(n) ≤ c2g(n) for all values of n≥m

I know, I know it was a lot of information. Let's make it more simple by using what we have learned to calculate the time complexity of an algorithm.

int linearSearch(int[] arr, int key){

for(int i=0;i<arr.length;i++){

if(arr[i] == key){

return i;

}

}

return -1;

}

It's a simple program of linear search in an array. There can be multiple cases in this.

- Best Case: If the element we are searching for in the array is present at the index 0. In this case, the for loop needs to run only once irrespective of the size of the array hence, constant work. This defines the lower bound of the array.

So the time complexity in Omega notation is Ω(1)

- Worst Case: If the element we are searching for is present at the last index of the array or maybe not present at all. In this case, the for loop has to run the number of times equal to the size of the array. Hence the time complexity, in this case, is linearly proportional to the size of the array.

We can use the theta notation to represent time complexity here.

The time complexity in theta notation is Θ(n)

- Average Case: Usually the element we are searching for will lie between 0 - the last index. We don't know for sure where the element is in the array(if it is) and how many times the for loop will run.

In this case, we can use the Big O notation as it provides an upper bound. This means doesn't matter where the element we are searching for is present or not. The time complexity will not be more than the one provided by the Big O notation.

The time complexity in big O notation is O(n)

Resources

If you want to learn more about time complexity, complexity analysis, and asymptotic notations here are some good resources:

Thanks for reading, share this with your friends who are learning data structures and algorithms and let's learn and practice together. Follow Mudit Mishra on Hashnode and subscribe to my email newsletter to get notified as soon as I publish my next article in the DSA With Me series.